35+ Data Engineer Interview Questions That Test Real Pipeline Experience

Data engineering interviews go beyond SQL. After building pipelines processing billions of records daily at scale, here are the questions that separate pipeline plumbers from data architects.

The data engineer role has evolved dramatically. It's no longer just about writing SQL and scheduling cron jobs. Modern data engineers build real-time streaming systems, manage petabyte-scale warehouses, and ensure data quality at every step.

These questions reflect what companies like Airbnb, Spotify, Netflix, and data-intensive startups actually ask. Master these, and you'll demonstrate you can handle data at any scale.

Core Competencies Tested

- Data Modeling: Dimensional modeling, normalization, schema design

- ETL/ELT: Pipeline design, orchestration, error handling

- Big Data: Spark, distributed computing, partitioning strategies

- SQL Mastery: Complex queries, optimization, window functions

- Data Quality: Testing, monitoring, observability

Data Modeling & SQL (Questions 1-12)

1. Explain the difference between star schema and snowflake schema.

Star Schema: Fact table at center, denormalized dimension tables directly connected. Simpler queries, faster performance, more storage.

Snowflake Schema: Dimensions are normalized into multiple tables. Less storage, more complex joins, harder to query.

In practice: Star schema is preferred for analytics—query simplicity beats storage savings.

2. What are slowly changing dimensions (SCD)? Explain Type 1, 2, and 3.

Type 1: Overwrite old value. No history kept. Use when history doesn't matter.

Type 2: Add new row with version tracking (start_date, end_date, is_current). Full history preserved.

Type 3: Add column for previous value. Limited history (only one previous value).

3. Write a SQL query to find duplicate records in a table.

-- Using GROUP BY and HAVING SELECT email, COUNT(*) as count FROM users GROUP BY email HAVING COUNT(*) > 1; -- Using window function (shows all duplicate rows) SELECT * FROM ( SELECT *, COUNT(*) OVER (PARTITION BY email) as cnt FROM users ) t WHERE cnt > 1;

4. Explain window functions. When would you use ROW_NUMBER vs RANK vs DENSE_RANK?

ROW_NUMBER: Unique sequential number (1,2,3,4). Use for pagination or deduplication.

RANK: Same value gets same rank, gaps after ties (1,2,2,4). Use for competition rankings.

DENSE_RANK: Same value gets same rank, no gaps (1,2,2,3). Use when you need consecutive ranks.

5. What's the difference between OLTP and OLAP systems?

OLTP: Transactional systems. Many small read/writes, normalized data, row-oriented storage. Examples: PostgreSQL, MySQL.

OLAP: Analytical systems. Few large reads, denormalized data, columnar storage. Examples: Snowflake, BigQuery, Redshift.

6. How would you optimize a slow SQL query?

- Check execution plan (EXPLAIN ANALYZE)

- Add appropriate indexes

- Avoid SELECT * — only select needed columns

- Filter early in the query

- Replace correlated subqueries with JOINs

- Consider partitioning for large tables

- Use appropriate data types

- Analyze table statistics

7. Explain database partitioning strategies.

Range partitioning: By date ranges—great for time-series data

List partitioning: By discrete values—regions, categories

Hash partitioning: Even distribution—when no natural partition key

Composite: Combination—e.g., range by date, then hash by user_id

8. What is data normalization? When would you denormalize?

Normalization: Organizing data to reduce redundancy (1NF, 2NF, 3NF). Good for OLTP—data integrity, less storage.

Denormalization: Intentionally adding redundancy for read performance. Good for OLAP—fewer joins, faster queries. Trade-off: data consistency complexity.

9. Write a query to calculate running total and moving average.

SELECT

date,

revenue,

SUM(revenue) OVER (ORDER BY date) as running_total,

AVG(revenue) OVER (

ORDER BY date

ROWS BETWEEN 6 PRECEDING AND CURRENT ROW

) as moving_avg_7day

FROM daily_sales;10. Explain the difference between DELETE, TRUNCATE, and DROP.

DELETE: Removes rows (can use WHERE), logged, can rollback, triggers fire. Slower.

TRUNCATE: Removes all rows, minimal logging, can't rollback, resets identity. Faster.

DROP: Removes entire table structure and data. Can't recover without backup.

11. What are CTEs? When would you use recursive CTEs?

Common Table Expressions (WITH clause) create temporary named result sets. Improve readability, allow reuse within query.

Recursive CTEs: For hierarchical data—org charts, bill of materials, tree structures.

12. How do you handle NULL values in SQL aggregations?

Most aggregations ignore NULLs (SUM, AVG, COUNT(column)). COUNT(*) counts all rows. Use COALESCE or NULLIF to handle NULLs explicitly. Be careful with AVG—NULLs aren't counted in denominator.

ETL/ELT & Data Pipelines (Questions 13-22)

13. What's the difference between ETL and ELT?

ETL: Transform before loading. Traditional approach—transform in staging area, load clean data. Good when compute is expensive at destination.

ELT: Load raw data, transform in warehouse. Modern approach—leverage powerful warehouse compute (Snowflake, BigQuery). More flexible, easier debugging.

14. How do you handle failed records in a data pipeline?

- Dead letter queue for failed records

- Log failures with context for debugging

- Alerting on failure thresholds

- Implement retry logic with backoff

- Separate bad data from good—don't block pipeline

- Root cause analysis and data quality feedback

15. Explain idempotency in data pipelines. Why is it important?

Running the same pipeline multiple times produces the same result. Critical for reliability—retries and backfills shouldn't create duplicates.

Implementation: MERGE/UPSERT instead of INSERT, partition replacement, deterministic processing, unique constraints.

16. What is data lineage and why does it matter?

Tracking data from source through transformations to final destination. Shows where data came from, how it changed, and what depends on it.

Benefits: Impact analysis, debugging, compliance (GDPR), trust in data, understanding data flow.

17. How would you design a pipeline to ingest data from multiple sources?

- Standardized ingestion layer with connectors

- Raw zone (landing) preserving source data

- Staging zone for transformations

- Curated zone for analytics-ready data

- Schema evolution handling

- Data quality checks at each stage

- Metadata management and cataloging

18. Explain Airflow DAGs. How do you handle dependencies?

DAGs (Directed Acyclic Graphs) define task dependencies—what runs before what.

Dependency patterns: Sequential (>>), parallel (lists), branching, cross-DAG dependencies (ExternalTaskSensor), trigger rules for handling upstream failures.

19. What's the difference between batch and streaming processing?

Batch: Process bounded datasets periodically. Higher throughput, easier debugging, higher latency. Good for reports, ML training.

Streaming: Process unbounded data continuously. Lower latency, more complex, exactly-once is hard. Good for real-time dashboards, fraud detection.

20. How do you ensure data quality in pipelines?

- Schema validation at ingestion

- Null checks, uniqueness constraints

- Referential integrity checks

- Statistical checks (row counts, distributions)

- Freshness monitoring

- Tools like Great Expectations, dbt tests

- Alert on anomalies

21. Explain CDC (Change Data Capture). What are its approaches?

CDC captures changes (inserts, updates, deletes) from source databases for incremental processing.

Approaches: Log-based (Debezium reads transaction logs—most reliable), timestamp-based (query modified_at—misses deletes), trigger-based (database triggers—adds overhead).

22. What is dbt and how does it fit in the data stack?

dbt (data build tool) handles the T in ELT—transforms data in the warehouse using SQL.

Features: Version-controlled transformations, testing, documentation, lineage, modular models, Jinja templating. Fits between raw data ingestion and BI tools.

Big Data & Spark (Questions 23-35)

23. Explain Spark architecture (driver, executors, cluster manager).

Driver: Runs main program, creates SparkContext, schedules tasks

Executors: Worker processes that run tasks and store data

Cluster Manager: Allocates resources (YARN, Kubernetes, Mesos, Standalone)

24. What's the difference between transformations and actions in Spark?

Transformations: Lazy operations that define computation (map, filter, join). Create new RDD/DataFrame, don't execute immediately.

Actions: Trigger execution and return results (collect, count, write). Force evaluation of transformation chain.

25. What is data skew? How do you handle it in Spark?

Skew occurs when data is unevenly distributed across partitions—some partitions have much more data, causing stragglers.

Solutions: Salting keys, broadcast joins for small tables, adaptive query execution, repartitioning, separate processing for hot keys.

26. Explain the difference between narrow and wide transformations.

Narrow: Each input partition contributes to one output partition (map, filter). No shuffle—fast.

Wide: Input partitions contribute to multiple output partitions (groupBy, join). Requires shuffle—expensive.

27. What is Spark SQL catalyst optimizer?

Catalyst optimizes query execution through: parsing → analysis → logical optimization (predicate pushdown, constant folding) → physical planning (join strategy selection) → code generation. Enables automatic query optimization.

28. When would you use broadcast joins vs shuffle joins?

Broadcast join: When one table is small enough to fit in memory on each executor. Avoids shuffle—very fast.

Shuffle join: When both tables are large. Data is shuffled by join key across cluster.

29. Explain partitioning vs bucketing in Spark/Hive.

Partitioning: Divides data into directories by column values (date, region). Good for filtering—skips irrelevant partitions.

Bucketing: Distributes data into fixed number of files by hash of column. Good for joins—co-located data reduces shuffle.

30. What are the different file formats? When would you use each?

Parquet: Columnar, compressed, schema evolution. Best for analytics.

ORC: Similar to Parquet, better for Hive. ACID support.

Avro: Row-based, schema in file. Good for streaming, schema evolution.

Delta/Iceberg: Table formats adding ACID, time travel, schema evolution to data lakes.

31. How do you debug a slow Spark job?

- Check Spark UI for stage times and task distribution

- Look for skewed partitions (some tasks much slower)

- Identify shuffles and their sizes

- Check for spill to disk

- Review join strategies—broadcast if possible

- Optimize serialization (Kryo)

- Tune memory and parallelism settings

32. Explain Kafka architecture and when you'd use it.

Components: Producers send messages to topics, topics are split into partitions, consumers read from partitions, brokers store data.

Use cases: Event streaming, log aggregation, real-time analytics, decoupling services, CDC.

33. What is exactly-once semantics? How do you achieve it?

Processing guarantees: at-most-once (may lose), at-least-once (may duplicate), exactly-once (neither lost nor duplicated).

Achieving exactly-once: Idempotent operations, transactional writes, checkpointing with deduplication, Kafka transactions + Spark structured streaming.

34. Compare data lakehouse vs data warehouse vs data lake.

Data Lake: Raw data storage (S3, HDFS). Cheap, flexible, but lacks structure/governance.

Data Warehouse: Structured, curated data. Fast queries, strong governance, expensive.

Lakehouse: Combines both—cheap storage with warehouse features (ACID, schema, performance). Delta Lake, Iceberg, Hudi.

35. What is data mesh? How does it differ from traditional data architecture?

Data mesh decentralizes data ownership to domain teams instead of central data team.

Principles: Domain ownership, data as product, self-serve platform, federated governance. Good for large organizations where central team becomes bottleneck.

Practice Data Engineering Interviews

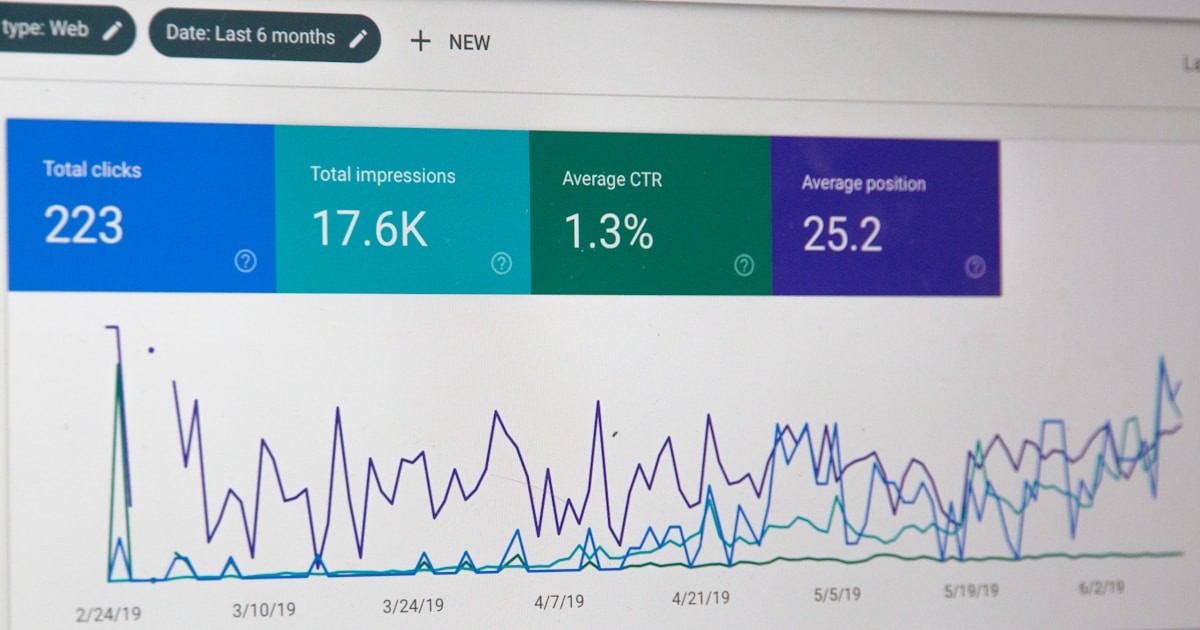

Data engineering interviews often involve SQL challenges and system design for pipelines. Many candidates practice with LastRound AI to get comfortable explaining complex architectures under interview pressure.

What Separates Great Data Engineers

❌ Red Flags

- • Can't explain why pipelines fail

- • Never mentions data quality or testing

- • Only knows one tool deeply

- • Doesn't consider scale implications

- • No understanding of costs

✓ Green Flags

- • Thinks about failure modes upfront

- • Emphasizes observability and monitoring

- • Understands trade-offs between approaches

- • Considers downstream consumers

- • Balances engineering ideals with pragmatism

Written by

Shekhar

LastRound AI

On the LastRound AI team. Writes about career advice, behavioral interviews, and how to navigate hiring at startups and big tech.

Further reading

- The Muse — STAR method guide — How to structure behavioural answers

- Tech Interview Handbook — Free, open-source interview prep

- Cracking the Coding Interview — Industry-standard prep book reference

Share this post

Related articles

Interview Q&A

50 API Developer Interview Questions & Answers 2026 (REST, GraphQL, Design) | LastRound AI

Interview Q&A

35+ Account Executive Interview Questions 2026 (Enterprise Sales) | LastRound AI

Interview Q&A

30+ Blockchain Developer Interview Questions & Answers 2026 (Smart Contracts & Web3) | LastRound AI

Interview Q&A

35+ Business Analyst Interview Questions That Actually Matter (2026 Guide) | LastRound AI