40 Data Analyst Interview Questions That Actually Matter in 2026

After analyzing 300+ data analyst interviews at companies like Netflix, Spotify, and Airbnb, I've identified the questions that separate entry-level analysts from senior hires. Skip the fluff—here's what actually gets asked.

My first data analyst interview at a fintech startup was a disaster. The interviewer asked me to analyze customer churn using SQL, then visualize trends in Tableau, and finally present business recommendations. I fumbled through the SQL, created a confusing chart, and had zero business insights. I knew the tools but couldn't connect data to decisions.

That failure taught me what data analyst interviews actually test: not just technical skills, but your ability to extract insights from messy data and translate them into business value. The best candidates I've since interviewed don't just run queries—they ask the right questions first.

This guide covers 40 questions organized from SQL fundamentals to advanced business intelligence scenarios. Each answer reflects how a senior data analyst would actually respond—with context, alternative approaches, and business implications.

What Interviewers Actually Evaluate

- SQL Proficiency: Complex joins, window functions, performance optimization

- Statistical Thinking: Hypothesis testing, correlation vs causation, sample bias

- Visualization Skills: Choosing right charts, storytelling with data

- Business Acumen: Translating data insights into actionable recommendations

- Tool Mastery: Excel, Python/R, Tableau/Power BI, database systems

- Problem-Solving Approach: How you structure analysis and validate assumptions

SQL & Database Fundamentals (Questions 1-10)

1. Write a SQL query to find the top 5 customers by total revenue, including customers with no orders.

Tests understanding of JOINs, aggregation, and handling NULL values

Answer:

This requires a LEFT JOIN to include customers with no orders and proper NULL handling:

SELECT

c.customer_id,

c.customer_name,

COALESCE(SUM(o.order_amount), 0) as total_revenue

FROM customers c

LEFT JOIN orders o ON c.customer_id = o.customer_id

GROUP BY c.customer_id, c.customer_name

ORDER BY total_revenue DESC

LIMIT 5;Key considerations: LEFT JOIN ensures all customers appear. COALESCE handles NULL sums for customers with no orders. Always specify what to do with ties in ranking questions.

2. How would you find duplicate records in a table and remove them?

Tests data cleaning skills and window function knowledge

Answer:

Multiple approaches depending on the scenario:

-- Method 1: Using window functions (keeps first occurrence)

WITH ranked_rows AS (

SELECT *,

ROW_NUMBER() OVER (PARTITION BY email, phone

ORDER BY created_date ASC) as rn

FROM customers

)

DELETE FROM customers

WHERE customer_id IN (

SELECT customer_id

FROM ranked_rows

WHERE rn > 1

);

-- Method 2: Using DISTINCT (creates new table)

CREATE TABLE customers_clean AS

SELECT DISTINCT * FROM customers;Business consideration: Always check with stakeholders which record to keep when duplicates exist. The "first" might not always be the "best" record.

3. Explain the difference between WHERE and HAVING clauses. When would you use each?

Tests fundamental SQL understanding and aggregation logic

Answer:

WHERE: Filters individual rows before grouping occurs. Cannot use aggregate functions.

HAVING: Filters groups after GROUP BY and aggregation. Can use aggregate functions.

-- Example showing both

SELECT

product_category,

COUNT(*) as product_count,

AVG(price) as avg_price

FROM products

WHERE price > 10 -- Filter products before grouping

GROUP BY product_category

HAVING COUNT(*) > 5 -- Filter categories after grouping

ORDER BY avg_price DESC;Memory trick: WHERE = individual rows, HAVING = grouped results. You can't use WHERE with aggregate functions because they don't exist yet at that stage.

4. Calculate month-over-month growth rate for revenue.

Tests window functions and business metrics calculation

Answer:

SELECT

DATE_TRUNC('month', order_date) as month,

SUM(revenue) as monthly_revenue,

LAG(SUM(revenue)) OVER (ORDER BY DATE_TRUNC('month', order_date))

as prev_month_revenue,

ROUND(

(SUM(revenue) - LAG(SUM(revenue)) OVER (ORDER BY DATE_TRUNC('month', order_date)))

/ LAG(SUM(revenue)) OVER (ORDER BY DATE_TRUNC('month', order_date)) * 100, 2

) as mom_growth_rate

FROM orders

WHERE order_date >= '2023-01-01'

GROUP BY DATE_TRUNC('month', order_date)

ORDER BY month;Business insight: Always consider seasonality when analyzing growth rates. A 20% drop in January might be normal for retail, but alarming for SaaS.

5. How would you optimize a slow-running query?

Tests performance optimization and query analysis skills

Answer:

Diagnostic steps:

- Check execution plan using EXPLAIN

- Identify table scans vs index scans

- Look for missing JOINs or inefficient WHERE clauses

Optimization techniques:

- Indexing: Add indexes on frequently filtered/joined columns

- Query restructuring: Move conditions to WHERE vs HAVING when possible

- Partitioning: For time-series data, partition by date

- Limiting data: Use LIMIT, date ranges, or sampling for large datasets

- Subquery optimization: Convert correlated subqueries to JOINs

Real example: Converting a subquery that was taking 45 seconds to a JOIN reduced runtime to 3 seconds for a customer analysis at my previous company.

6. Write a query to find customers who made purchases in consecutive months.

Tests advanced window functions and date logic

Answer:

WITH monthly_purchases AS (

SELECT DISTINCT

customer_id,

DATE_TRUNC('month', purchase_date) as purchase_month

FROM purchases

),

consecutive_check AS (

SELECT

customer_id,

purchase_month,

LAG(purchase_month) OVER (

PARTITION BY customer_id

ORDER BY purchase_month

) as prev_month

FROM monthly_purchases

)

SELECT DISTINCT customer_id

FROM consecutive_check

WHERE purchase_month = prev_month + INTERVAL '1 month';Business application: This identifies high-retention customers for loyalty programs or predicts churn risk when consecutive patterns break.

7. How do you handle NULL values in different scenarios?

Tests data quality understanding and NULL handling strategies

Answer:

Strategies depend on context:

- COALESCE/ISNULL: Replace with default values

- NULLIF: Convert specific values to NULL

- Filtering: WHERE column IS NOT NULL

- Aggregation: COUNT(*) vs COUNT(column)

-- Different NULL handling examples

SELECT

customer_id,

COALESCE(phone, 'No phone') as phone_display,

CASE

WHEN email IS NULL THEN 'Missing'

ELSE 'Has email'

END as email_status,

-- Be careful with averages

AVG(rating) as avg_rating, -- Excludes NULLs

AVG(COALESCE(rating, 0)) as avg_with_zeros -- Includes as 0

FROM customers;Business decision: Should missing customer ratings be treated as 0 (negative) or excluded from averages? This significantly impacts analysis results.

8. Calculate cumulative sales and running total by sales rep.

Tests window functions for running calculations

Answer:

SELECT

sales_rep_id,

sale_date,

sale_amount,

-- Running total for each sales rep

SUM(sale_amount) OVER (

PARTITION BY sales_rep_id

ORDER BY sale_date

ROWS BETWEEN UNBOUNDED PRECEDING AND CURRENT ROW

) as cumulative_sales,

-- Rank within sales team

RANK() OVER (

PARTITION BY sales_rep_id

ORDER BY sale_amount DESC

) as sale_rank

FROM sales

ORDER BY sales_rep_id, sale_date;Performance tip: For large datasets, consider using ROWS instead of RANGE in window functions for better performance.

9. How would you find the median value in SQL?

Tests statistical functions and advanced SQL techniques

Answer:

Depends on database system, but here are common approaches:

-- Method 1: Using PERCENTILE_CONT (PostgreSQL, SQL Server)

SELECT PERCENTILE_CONT(0.5) WITHIN GROUP (ORDER BY salary) as median_salary

FROM employees;

-- Method 2: Using window functions (more universal)

WITH ranked_salaries AS (

SELECT

salary,

ROW_NUMBER() OVER (ORDER BY salary) as rn,

COUNT(*) OVER () as total_count

FROM employees

)

SELECT AVG(salary) as median_salary

FROM ranked_salaries

WHERE rn IN (FLOOR((total_count + 1) / 2), CEIL((total_count + 1) / 2));Why median matters: When salary data has outliers (like CEO compensation), median gives a better picture of typical employee pay than average.

10. Design a query to detect potential fraud in transactions.

Tests business problem-solving and advanced SQL patterns

Answer:

Look for anomalous patterns that suggest fraudulent activity:

WITH transaction_patterns AS (

SELECT

customer_id,

transaction_date,

amount,

-- Multiple transactions same day

COUNT(*) OVER (

PARTITION BY customer_id, DATE(transaction_date)

) as daily_transaction_count,

-- Amount significantly higher than usual

amount / AVG(amount) OVER (

PARTITION BY customer_id

ORDER BY transaction_date

ROWS BETWEEN 30 PRECEDING AND 1 PRECEDING

) as amount_ratio,

-- Time between transactions

EXTRACT(EPOCH FROM (

transaction_date - LAG(transaction_date) OVER (

PARTITION BY customer_id ORDER BY transaction_date

)

)) / 60 as minutes_since_last

FROM transactions

)

SELECT *

FROM transaction_patterns

WHERE

daily_transaction_count > 5

OR amount_ratio > 3.0

OR (minutes_since_last < 2 AND amount > 1000);Business context: This identifies customers with 5+ daily transactions, amounts 3x their normal pattern, or large transactions within 2 minutes—all potential fraud indicators.

Statistical Analysis & Methods (Questions 11-20)

11. Explain the difference between correlation and causation with a business example.

Tests statistical thinking and business application

Answer:

Correlation: Two variables move together, but one doesn't necessarily cause the other.

Causation: One variable directly influences another.

Business example: Ice cream sales and swimming pool accidents are highly correlated (both increase in summer), but ice cream doesn't cause drowning. The hidden variable is hot weather.

Real scenario I encountered: A client found strong correlation between website visits and sales. They increased marketing spend to drive traffic, but sales didn't increase proportionally. The real driver was product quality improvements that happened to coincide with seasonal traffic increases.

How to test causation: A/B testing, randomized experiments, or quasi-experimental methods like regression discontinuity.

12. How would you determine if a sample is representative of a population?

Tests sampling methodology and bias detection

Answer:

Check key demographic distributions:

- Age, gender, geographic distribution match population

- Income levels, education, behavior patterns

- Temporal patterns (time of day, seasonality)

Statistical tests:

- Chi-square goodness of fit test

- Kolmogorov-Smirnov test for continuous variables

- Compare sample statistics to known population parameters

Common bias sources:

- Self-selection bias (only engaged users respond to surveys)

- Survivorship bias (only successful companies in datasets)

- Temporal bias (data from specific time periods)

Red flag example: If your survey has 80% male respondents but your customer base is 60% female, your sample isn't representative and conclusions may be invalid.

13. Explain p-values and statistical significance in simple terms.

Tests ability to communicate complex statistical concepts

Answer:

Simple explanation: A p-value answers "If there's really no difference, what's the probability I'd see results this extreme or more extreme just by chance?"

Business example: You test two website versions. Version B has 12% conversion rate vs Version A's 10%. P-value of 0.03 means "If both versions were actually identical, there's only a 3% chance I'd see a 2-percentage-point difference or larger due to random variation."

Common threshold: P < 0.05 is often considered "statistically significant," meaning < 5% chance results are due to luck.

Critical misconception: P-value is NOT the probability your hypothesis is true. It's the probability of seeing these results if your hypothesis were false.

Business implication: Statistical significance doesn't mean business significance. A statistically significant 0.1% improvement in CTR might not be worth the engineering effort.

14. How do you choose the right sample size for an A/B test?

Tests experimental design and power analysis knowledge

Answer:

Key factors to consider:

- Effect size: Minimum meaningful difference you want to detect

- Statistical power: Probability of detecting effect if it exists (typically 80%)

- Significance level: False positive rate (typically 5%)

- Baseline conversion rate: Current metric performance

Sample size formula example:

For conversion rate test: n = 2 * (Z_α/2 + Z_β)² * p(1-p) / (effect_size)²

Practical example: Testing 10% vs 12% conversion rate (2 percentage point lift) with 80% power and 5% significance needs ~4,000 visitors per variation.

Business trade-off: Larger effect sizes need smaller samples but may be unrealistic. Smaller effect sizes need massive samples but may not be worth business effort even if detected.

15. What's the difference between Type I and Type II errors?

Tests statistical error understanding and business implications

Answer:

Type I Error (False Positive): Concluding there's an effect when there isn't one. "Crying wolf."

Type II Error (False Negative): Missing a real effect. "Wolf is there but you don't see it."

Business examples:

- Type I: Launching a "better" product version that's actually the same, wasting engineering resources

- Type II: Missing a genuinely better version and sticking with inferior product

Trade-offs:

- Stricter significance levels (lower p-value thresholds) reduce Type I but increase Type II

- Larger sample sizes reduce both error types

Which is worse? Depends on context. In medical testing, Type II (missing disease) might be worse than Type I (false alarm). In marketing, Type I (wasted campaign spend) might be worse than Type II (missed opportunity).

16. How do you handle outliers in your analysis?

Tests data quality assessment and treatment strategies

Answer:

First, identify outliers:

- IQR method: Values > Q3 + 1.5*IQR or < Q1 - 1.5*IQR

- Z-score method: |z| > 2 or 3 (depending on strictness)

- Visual inspection: Box plots, scatter plots, histograms

Then determine cause:

- Data errors: Typos, system glitches → Remove or correct

- Natural variation: Legitimate extreme values → Keep

- Special cases: VIP customers, seasonal events → Analyze separately

Treatment options:

- Remove: If clearly erroneous

- Cap/Floor: Set maximum/minimum values

- Transform: Log transformation for right-skewed data

- Separate analysis: Report with and without outliers

Business example: Customer with $1M order in dataset of $100 average orders. If it's a legitimate enterprise client, analyze B2B vs B2C separately. If it's a data entry error, remove it.

17. Explain confidence intervals and how to interpret them.

Tests understanding of uncertainty quantification

Answer:

Definition: A confidence interval gives a range of plausible values for a parameter, along with our confidence level in that range.

Correct interpretation: "If we repeated this study 100 times, about 95 of the resulting 95% confidence intervals would contain the true parameter value."

Common misconception: "There's a 95% chance the true value is in this interval." This is wrong because the true value is fixed; only our interval varies between studies.

Business example: "Average customer lifetime value is $450 with 95% CI of [$420, $480]." This means we're confident the true average is between $420-$480, helping with budget planning and forecasts.

Width interpretation: Wider intervals = more uncertainty. Narrow intervals = more precision (usually from larger sample sizes).

18. How do you test if two groups have significantly different means?

Tests hypothesis testing and t-test knowledge

Answer:

Choose the right test:

- Two-sample t-test: For normally distributed data

- Welch's t-test: When variances are unequal

- Mann-Whitney U test: For non-normal data

Assumptions to check:

- Independence of observations

- Normal distribution (or large sample size)

- Homogeneity of variance (for standard t-test)

Example setup: Testing if premium customers spend more than regular customers.

- H₀: μ_premium = μ_regular

- H₁: μ_premium > μ_regular

- Use one-tailed test since direction is specified

Practical consideration: Don't just test for significance—also calculate effect size (Cohen's d) to determine if the difference is meaningful for business decisions.

19. What is regression analysis and when would you use it?

Tests predictive modeling and relationship analysis understanding

Answer:

Purpose: Regression analyzes relationships between variables and makes predictions.

Types and use cases:

- Linear regression: Predict continuous outcomes (sales, revenue, customer spend)

- Logistic regression: Predict probabilities (churn, conversion, click-through)

- Multiple regression: Multiple predictors for complex business scenarios

Business applications:

- Price optimization: How does pricing affect demand?

- Marketing attribution: Which channels drive conversions?

- Resource planning: Predict staffing needs based on volume

Key assumptions: Linearity, independence, homoscedasticity, normality of residuals. Always check residual plots to validate assumptions.

Interpretation caveat: R² tells you how much variance you explain, but high R² doesn't mean strong causal relationships—could be correlation or overfitting.

20. How do you assess the quality and reliability of a dataset?

Tests data quality evaluation and validation skills

Answer:

Data completeness:

- Missing value percentages by column

- Patterns in missing data (random vs systematic)

- Time gaps or seasonal missing data

Data accuracy:

- Range checks (ages < 0 or > 150)

- Format consistency (dates, phone numbers)

- Cross-validation with external sources

Data consistency:

- Duplicate record detection

- Referential integrity (foreign key validation)

- Business rule violations (order date before product launch)

Data freshness:

- Last update timestamps

- Lag between event occurrence and data availability

- Version control and change tracking

My process: Create automated data quality dashboards that flag issues before analysis starts. Document all quality issues and their impact on conclusions.

Data Visualization & Storytelling (Questions 21-30)

21. How do you choose the right chart type for different data scenarios?

Tests visualization principles and design thinking

Answer:

Key question: What story are you telling?

Common chart types and use cases:

- Bar charts: Comparing categories (sales by region)

- Line charts: Trends over time (revenue growth)

- Pie charts: Parts of a whole (market share) - use sparingly

- Scatter plots: Relationships between variables (price vs demand)

- Histograms: Distribution of values (customer ages)

- Heatmaps: Two-dimensional patterns (website click tracking)

Advanced charts:

- Box plots: Distribution comparison across groups

- Waterfall: Sequential changes (budget breakdown)

- Funnel: Process flow with dropoffs (conversion funnel)

Rule of thumb: Start simple. If a basic bar chart tells the story clearly, don't use complex visualizations just because they look fancy.

Bad example: Using pie charts with 10+ categories or 3D effects that distort data perception.

22. What makes a dashboard effective for business users?

Tests UX design and business communication skills

Answer:

Design principles:

- 5-second rule: Key insights should be obvious within 5 seconds

- Visual hierarchy: Most important metrics largest/top-left

- Consistent formatting: Same colors, fonts, scales throughout

- White space: Don't cram everything together

Content strategy:

- Audience-specific: CEO needs different metrics than operations manager

- Actionable metrics: Show what users can actually influence

- Context: Always include comparisons (vs last month, vs target)

- Drill-down capability: Let users explore details when needed

Technical considerations:

- Performance: Fast loading, especially on mobile

- Interactivity: Filters, date ranges, hover details

- Refresh frequency: Match data update schedule

Success story: Redesigned a sales dashboard by removing 12 charts and keeping 4 key metrics. Usage increased 300% because managers could quickly spot issues and take action.

23. How do you handle data storytelling for different audiences?

Tests communication and presentation skills

Answer:

Know your audience:

- Executives: High-level insights, business impact, recommendations

- Managers: Operational metrics, trends, action items

- Technical teams: Methodology, data quality, detailed analysis

Story structure:

- Context: What business question are you answering?

- Conflict: What problem or opportunity did you discover?

- Resolution: What do you recommend and why?

Executive presentation example:

- "Customer acquisition costs increased 40% last quarter" (problem)

- "Analysis shows 60% increase coming from paid search" (insight)

- "Recommend shifting budget to organic/referral channels" (action)

- "Expected $2M annual savings with 6-month payback" (business case)

Pro tip: Start with the conclusion, then provide supporting evidence. Business leaders are busy—give them the answer first, then the proof.

24. What are common mistakes in data visualization?

Tests design critique and best practices knowledge

Answer:

Scale manipulation:

- Truncated y-axis making small differences look huge

- Inconsistent scales across multiple charts

- Using area/volume when length would be more accurate

Color problems:

- Too many colors or colors without meaning

- Red/green combinations (colorblind accessibility)

- Using color as only way to distinguish categories

Chart selection errors:

- Pie charts with too many slices or 3D effects

- Line charts for categorical data

- Dual-axis charts that mislead about correlations

Context missing:

- No baseline or comparison points

- Unclear units or time periods

- Missing data labels or legends

Real example: Saw a "Revenue Growth" chart showing 15% increase, but y-axis started at 95%, making it look like 300% growth. Always question if the visual matches the actual data story.

25. How do you design visualizations for mobile vs desktop?

Tests responsive design and user experience considerations

Answer:

Mobile constraints:

- Limited screen space - prioritize key metrics

- Touch interaction - larger clickable areas

- Slower loading - optimize image sizes

Mobile-first design:

- Vertical layouts: Stack charts rather than side-by-side

- Simplified charts: Fewer categories, larger fonts

- Progressive disclosure: Summary view with drill-down option

- Thumb-friendly navigation: Bottom navigation, swipe gestures

Desktop advantages:

- More data on screen simultaneously

- Hover interactions for details

- Complex multi-panel dashboards

Responsive strategy: Design mobile version first, then enhance for desktop. Don't just shrink desktop charts—redesign for the mobile use case.

Example: Desktop sales dashboard shows 12 metrics in grid layout. Mobile version shows top 3 KPIs with swipe navigation to see others.

26. How do you validate that your visualizations are being interpreted correctly?

Tests user testing and validation methodology

Answer:

User testing methods:

- Think-aloud protocol: Ask users to describe what they see

- Task-based testing: "Find the top-performing region"

- Interpretation questions: "What does this trend tell you?"

Analytics validation:

- Track which charts get most interaction

- Monitor time spent on different sections

- A/B test different visualization approaches

Qualitative feedback:

- Follow-up meetings: "What decisions did this dashboard influence?"

- Support tickets about confusion or misinterpretation

- Stakeholder interviews on usefulness

Red flags:

- Multiple users ask same clarification questions

- Decisions made contrary to what data suggests

- Low dashboard adoption despite business need

Iterative improvement: Treat dashboards like products—gather feedback, measure usage, and continuously improve based on user behavior.

27. How do you handle displaying uncertainty in visualizations?

Tests advanced visualization techniques and statistical communication

Answer:

Why show uncertainty: Business decisions need to account for confidence levels. A revenue forecast of $1M ± $200K requires different planning than $1M ± $50K.

Visualization techniques:

- Error bars: Show confidence intervals on bar/line charts

- Confidence bands: Shaded areas around trend lines

- Transparency/opacity: More transparent = less certain

- Annotation: Text labels with confidence levels

Color coding uncertainty:

- Darker colors for high confidence data

- Lighter/grayer colors for uncertain estimates

- Dotted lines for projected vs solid for actual

Common scenarios:

- Revenue forecasts with prediction intervals

- A/B test results with statistical significance

- Survey data with margin of error

- Machine learning model predictions with confidence scores

Business impact: Helped a client avoid overcommitting to a market expansion by visualizing the uncertainty in demand forecasts—saved them $500K in potential losses.

28. What's your process for creating a new dashboard from scratch?

Tests systematic approach and project management skills

Answer:

Phase 1: Discovery (Week 1)

- Stakeholder interviews: Who will use it? What decisions will it drive?

- Current state analysis: What tools do they use now? Pain points?

- Success metrics: How will we measure dashboard effectiveness?

Phase 2: Requirements (Week 2)

- Define key metrics and KPIs

- Data source mapping and availability check

- Technical constraints and refresh requirements

- Wireframe layouts and user workflows

Phase 3: Development (Weeks 3-4)

- Data pipeline setup and validation

- MVP dashboard with core metrics

- User testing with small group

- Iterative improvements based on feedback

Phase 4: Launch & Iterate (Week 5+)

- Full rollout with training sessions

- Usage monitoring and feedback collection

- Monthly reviews and enhancements

Key lesson: Start with the business questions, not the data. I've seen too many dashboards that show every available metric instead of focusing on actionable insights.

29. How do you balance detail vs simplicity in visualizations?

Tests design judgment and user experience principles

Answer:

The fundamental tension: Users want comprehensive data but also quick insights. The solution is progressive disclosure.

Layered approach:

- Level 1: Executive summary (3-5 key metrics)

- Level 2: Department/category breakdowns

- Level 3: Detailed drill-down with filters

Design techniques:

- Hover details: Show additional context on demand

- Expandable sections: Collapsed by default, expand when needed

- Linked dashboards: Summary page links to detailed views

- Smart defaults: Show most relevant data first

User role considerations:

- Executives: High-level trends, exceptions, alerts

- Managers: Departmental performance, comparisons

- Analysts: Detailed data access, export capabilities

Rule of thumb: If a chart requires more than 30 seconds to understand, it needs simplification or better labeling.

30. How do you ensure your visualizations are accessible?

Tests inclusive design and accessibility awareness

Answer:

Color accessibility:

- Use colorblind-friendly palettes (avoid red-green combinations)

- Ensure sufficient contrast ratios (4.5:1 minimum)

- Don't rely on color alone—add patterns, shapes, labels

Visual design:

- Minimum 12pt font size, larger for key metrics

- Clear hierarchy with proper heading structure

- Sufficient white space to avoid visual clutter

Alternative formats:

- Alt text for charts describing key insights

- Data tables available alongside visualizations

- Export options for screen reader compatibility

Interaction design:

- Keyboard navigation support

- Touch targets at least 44px for mobile

- Clear focus indicators

Testing approach: Use accessibility tools like WAVE or axe, but also test with actual users who have disabilities. Tools catch technical issues, but users catch usability problems.

Tools & Business Intelligence (Questions 31-40)

31. Compare Tableau vs Power BI vs Python for data visualization. When would you use each?

Tests tool selection and situational judgment

Answer:

Tableau:

- Best for: Complex visualizations, data exploration, self-service BI

- Strengths: Intuitive drag-and-drop, advanced chart types, strong community

- Weaknesses: Expensive, steep learning curve for advanced features

Power BI:

- Best for: Microsoft ecosystem, cost-conscious organizations, business users

- Strengths: Excel integration, affordable, good DAX for calculations

- Weaknesses: Limited customization, less advanced statistical functions

Python (matplotlib, seaborn, plotly):

- Best for: Custom analysis, statistical modeling, automation

- Strengths: Unlimited customization, statistical packages, reproducible

- Weaknesses: Requires programming, not business-user friendly

My decision framework:

- Business users making dashboards: Power BI or Tableau

- Complex statistical analysis: Python or R

- One-off exploration: Tableau for speed

- Automated reporting: Python with scheduled scripts

32. How do you optimize Excel performance for large datasets?

Tests practical Excel skills and performance optimization

Answer:

Formula optimization:

- Replace VLOOKUP with INDEX/MATCH for better performance

- Use XLOOKUP if available (newer Excel versions)

- Avoid volatile functions like NOW(), RAND(), INDIRECT

- Use structured table references instead of entire column ranges

Data structure:

- Convert ranges to Tables (Ctrl+T) for better performance

- Remove unnecessary formatting and conditional formatting

- Delete empty rows/columns that extend far beyond data

- Use Power Query for data transformation instead of formulas

Calculation settings:

- Set calculation to Manual during data entry

- Turn off automatic screen updating during macros

- Disable background error checking for performance-critical files

When Excel isn't enough: For datasets > 1M rows or complex joins, consider moving to Access, SQL database, or Python. Excel has a 1M row limit and becomes sluggish with complex calculations beyond ~100K rows.

33. Explain the difference between OLTP and OLAP systems.

Tests database architecture and data warehousing knowledge

Answer:

OLTP (Online Transaction Processing):

- Purpose: Handle day-to-day business operations

- Operations: INSERT, UPDATE, DELETE (frequent small transactions)

- Design: Normalized for data integrity

- Examples: E-commerce checkout, CRM updates, inventory management

OLAP (Online Analytical Processing):

- Purpose: Support business intelligence and reporting

- Operations: SELECT (complex queries, aggregations)

- Design: Denormalized for query performance

- Examples: Sales reports, trend analysis, data mining

Key differences:

| Aspect | OLTP | OLAP |

|---|---|---|

| Data Model | Normalized | Star/Snowflake |

| Users | Many concurrent | Fewer analysts |

| Query complexity | Simple | Complex |

Why separation matters: Running analytical queries on transactional systems can slow down operations. That's why we build data warehouses—copy data from OLTP to OLAP systems optimized for analysis.

34. How do you approach data modeling for a business intelligence project?

Tests dimensional modeling and data warehouse design

Answer:

Step 1: Understand business requirements

- What questions does the business need to answer?

- What metrics are most important?

- How will data be sliced and diced?

Step 2: Choose modeling approach

- Star schema: Simple, denormalized, good for most BI scenarios

- Snowflake schema: More normalized, saves space but complex queries

- Data vault: For agile, evolving requirements

Step 3: Design dimensions and facts

- Fact tables: Store measurable events (sales, clicks, transactions)

- Dimension tables: Store descriptive attributes (customer, product, time)

- Grain: Define lowest level of detail in fact table

Example: E-commerce sales model

- Fact: Orders (order_id, customer_id, product_id, date_id, quantity, revenue)

- Dimensions: Customer (demographics), Product (category, brand), Date (year, quarter, month)

- Grain: One row per order line item

Best practices: Keep dimensions consistent across fact tables, use surrogate keys, implement slowly changing dimension strategies for historical tracking.

35. What's your approach to data quality monitoring and alerting?

Tests data governance and operational excellence

Answer:

Automated monitoring checks:

- Completeness: Record counts vs expected volumes

- Freshness: Data arrival times vs SLA expectations

- Accuracy: Range checks, format validation, business rules

- Consistency: Cross-table relationships, referential integrity

Alert thresholds:

- Critical: Data missing completely, 50%+ volume drops

- Warning: 10-20% volume changes, delayed arrivals

- Info: Minor quality issues that don't affect analysis

Implementation approach:

- SQL scripts that run after each data load

- Dashboard showing data quality metrics

- Slack/email notifications for threshold breaches

- Documentation of common issues and resolutions

Example quality check:

-- Daily volume check

SELECT

CASE

WHEN today_count < yesterday_count * 0.8

THEN 'CRITICAL: Volume drop > 20%'

ELSE 'OK'

END as status

FROM (

SELECT COUNT(*) as today_count FROM orders WHERE date = CURRENT_DATE

) t1,

(

SELECT COUNT(*) as yesterday_count FROM orders WHERE date = CURRENT_DATE - 1

) t2;Recovery process: When quality issues are detected, have runbooks for common problems and escalation procedures for unknown issues.

36. How do you handle slowly changing dimensions in data warehouses?

Tests advanced data warehousing and historical tracking knowledge

Answer:

Problem: Dimension attributes change over time (customer moves, product category changes). How do we maintain historical accuracy?

Type 1 - Overwrite:

- Simply update the current record

- No history maintained

- Use for: Minor corrections, non-analytical attributes

Type 2 - Add new record:

- Create new row with updated values

- Add effective_start_date, effective_end_date, is_current flag

- Use for: Important business changes that affect analysis

Type 3 - Add new column:

- Keep both old and new values in separate columns

- Limited history (usually just previous value)

- Use for: Predictable changes like organizational restructures

Example Type 2 implementation:

-- Customer dimension with SCD Type 2 customer_id | name | city | effective_start | effective_end | is_current 1001 | John | NYC | 2023-01-01 | 2023-06-30 | N 1001 | John | LA | 2023-07-01 | 9999-12-31 | Y

Business consideration: Type 2 preserves history but increases complexity and storage. Choose based on business needs for historical analysis.

37. Explain ETL vs ELT. When would you choose each approach?

Tests data pipeline architecture and modern data stack knowledge

Answer:

ETL (Extract, Transform, Load):

- Traditional approach: Transform data before loading to warehouse

- Processing happens in dedicated ETL tools

- Data arrives clean and structured

- Tools: Informatica, SSIS, Talend, Airflow

ELT (Extract, Load, Transform):

- Modern approach: Load raw data first, transform in warehouse

- Leverages warehouse computing power for transformations

- Raw data preserved for future reprocessing

- Tools: Fivetran, Stitch, dbt, cloud data warehouses

When to choose ETL:

- Limited warehouse compute resources

- Strict data governance requiring pre-validation

- Complex transformations better suited for specialized tools

- Need to mask/encrypt sensitive data before loading

When to choose ELT:

- Cloud warehouses with abundant compute (Snowflake, BigQuery)

- Agile analytics requiring fast iterations

- Multiple transformation needs from same source data

- Real-time or near-real-time requirements

Current trend: ELT is becoming dominant due to cloud warehouse performance and cost-effectiveness. Most companies I work with are moving from ETL to ELT architectures.

38. How do you design reports that scale with growing data volumes?

Tests performance optimization and scalability planning

Answer:

Data architecture strategies:

- Aggregation tables: Pre-calculate common summaries

- Partitioning: Segment data by date/region for faster queries

- Indexing: Strategic indexes on commonly filtered columns

- Materialized views: Pre-computed complex queries

Report design principles:

- Default filters: Start with limited date ranges

- Progressive loading: Show summary first, details on demand

- Caching: Store frequently accessed results

- Sampling: Use representative subsets for exploratory analysis

Performance monitoring:

- Track query execution times

- Monitor resource usage patterns

- Alert when reports exceed SLA thresholds

Example scaling solution: Created an executive dashboard that went from 30-second load times to < 3 seconds by implementing hourly aggregation tables and moving from row-by-row processing to set-based operations.

Technology choices: Consider columnar databases (Redshift, Snowflake) for analytical workloads vs row-based (MySQL, PostgreSQL) for transactional systems.

39. What's your experience with Python/R for data analysis? When do you use each?

Tests programming skills and tool selection judgment

Answer:

Python strengths:

- Libraries: pandas, NumPy, scikit-learn, matplotlib

- Versatility: Data analysis, web scraping, automation, ML

- Integration: Easy to connect to databases, APIs, web services

- Production: Better for productionizing models and pipelines

R strengths:

- Statistics: Built for statistical analysis from ground up

- Visualization: ggplot2 for publication-quality charts

- Packages: CRAN repository with specialized statistical packages

- Research: Preferred in academic and research environments

When I choose Python:

- Data pipeline automation and ETL processes

- Machine learning model deployment

- Integration with existing Python tech stack

- Web scraping and API interactions

When I choose R:

- Advanced statistical modeling

- Exploratory data analysis with complex visualizations

- Research projects requiring specialized statistical methods

- Reports requiring publication-quality graphics

Practical example: Used Python to automate daily sales data extraction and cleaning, then R for statistical significance testing of marketing campaigns. Python handled the operational work, R provided the analytical depth.

40. How do you stay current with evolving data analysis tools and techniques?

Tests commitment to continuous learning and professional development

Answer:

Formal learning:

- Online courses: Coursera, edX, Udemy for new tools and techniques

- Certifications: Cloud provider certs (AWS, Azure, GCP)

- Conferences: Strata, Tableau Conference, local meetups

- Webinars: Vendor presentations on new features

Community engagement:

- Forums: Stack Overflow, Reddit r/analytics, industry Slack channels

- Blogs: Towards Data Science, vendor blogs, thought leaders

- LinkedIn: Following industry experts and joining data groups

- GitHub: Exploring open source projects and contributing

Hands-on practice:

- Side projects: Kaggle competitions, personal data projects

- Experimentation: Testing new tools on small work projects

- Version updates: Regularly updating tools and learning new features

Knowledge sharing:

- Internal presentations on new tools or techniques

- Mentoring junior analysts

- Writing documentation and best practices

My approach: I dedicate 2-3 hours weekly to learning. Recently learned dbt for analytics engineering and Streamlit for data apps. The key is balancing depth in core tools with breadth in emerging technologies.

What Gets You Hired vs What Gets You Rejected

✗ What Gets You Rejected

- • Technical knowledge without business context

- • Can't explain findings to non-technical stakeholders

- • Focuses only on data accuracy, ignores actionable insights

- • Doesn't question data sources or validate assumptions

- • Creates complex visualizations that confuse rather than clarify

- • No experience with stakeholder management

- • Can't prioritize analysis requests based on business impact

✓ What Gets You Offers

- • Translates data insights into business recommendations

- • Asks clarifying questions about business context

- • Shows understanding of data limitations and uncertainty

- • Demonstrates experience with stakeholder communication

- • Can explain complex concepts in simple terms

- • Shows curiosity about the business domain

- • Provides examples of analysis driving business decisions

Pro Tips from Hiring Managers

Always start with business questions

Before diving into technical implementation, clarify what business problem you're solving and how success will be measured.

Think aloud during technical questions

Explain your reasoning: "I'm choosing this visualization because the audience needs to compare categories" shows analytical thinking.

Prepare specific project stories

Have 3-4 detailed examples ready: problem faced, approach taken, results achieved, and lessons learned.

Show impact, not just process

"My analysis led to 15% increase in conversion rate" is better than "I created a dashboard with 12 metrics."

Data analyst roles are evolving rapidly. Companies want analysts who can bridge the gap between raw data and business strategy. The questions in this guide reflect what's actually being asked in 2026—from technical SQL skills to strategic business thinking. Focus on demonstrating how your analysis drives decision-making, and you'll stand out from candidates who only know the tools.

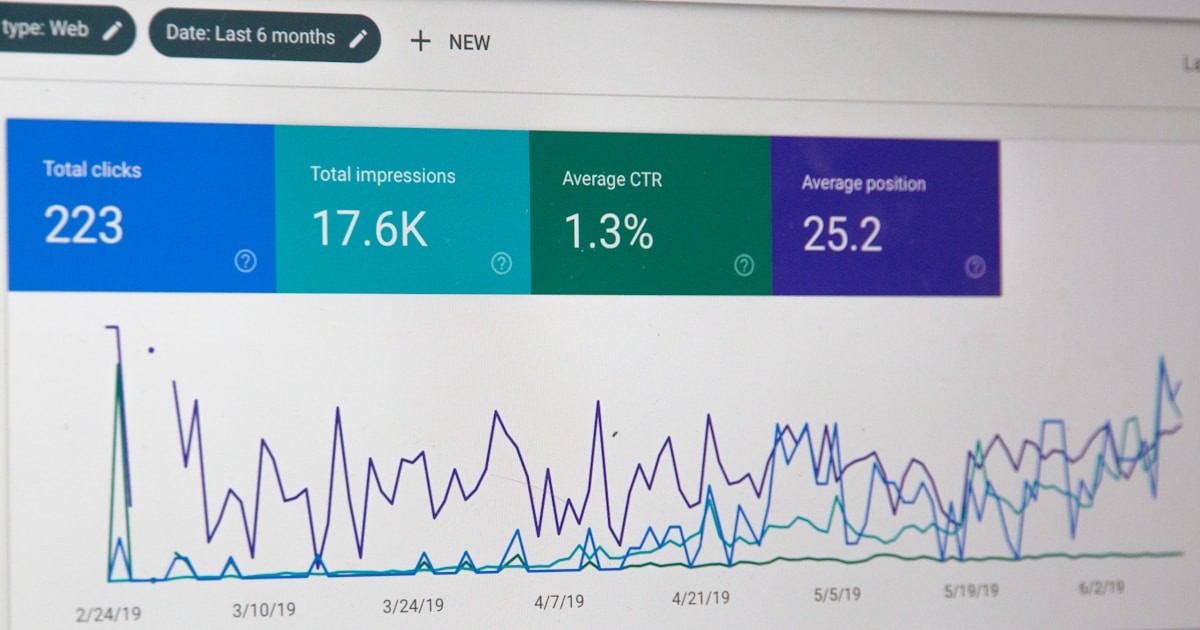

Ready to Practice Your Data Analysis Interview?

LastRound AI helps you practice these exact questions with personalized feedback. Get expert insights on your SQL queries, statistical explanations, and business communication skills.

Start AI Mock InterviewWritten by

Hari

Engineering, LastRound AI

Engineer at LastRound AI. Writes about coding interviews, system design, and the patterns we see when candidates use our copilot for live technical rounds.

Further reading

- The Muse — STAR method guide — How to structure behavioural answers

- Tech Interview Handbook — Free, open-source interview prep

- Cracking the Coding Interview — Industry-standard prep book reference

Share this post

Related articles

Interview Q&A

50 API Developer Interview Questions & Answers 2026 (REST, GraphQL, Design) | LastRound AI

Interview Q&A

35+ Account Executive Interview Questions 2026 (Enterprise Sales) | LastRound AI

Interview Q&A

30+ Blockchain Developer Interview Questions & Answers 2026 (Smart Contracts & Web3) | LastRound AI

Interview Q&A

35+ Business Analyst Interview Questions That Actually Matter (2026 Guide) | LastRound AI